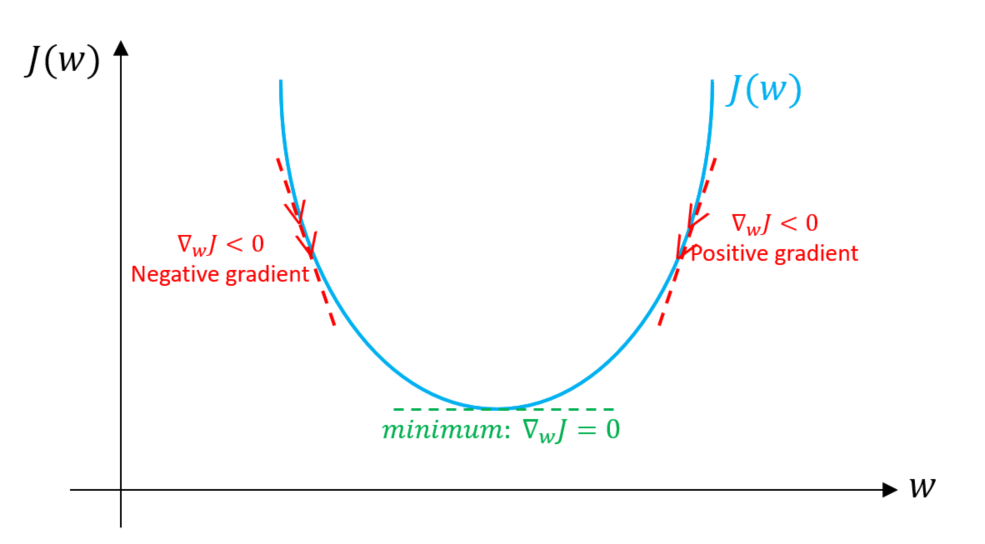

In machine learning, a gradient is the derivative of a function that has more than one input variable. Known in mathematical terms as the slope of a function, the gradient measures how much the error changes in response to a change in each of the weights.

Imagine a blindfolded man who wants to climb to the top of a hill in as few steps as possible. He might start by taking really big steps in the steepest direction, which he can do as long as he is not close to the top. As he comes closer to the top, however, his steps get smaller and smaller to avoid overshooting it. This process can be described mathematically using the gradient.

Imagine the accompanying image illustrates our hill from a top-down view and the red arrows are the steps of our climber. Think of a gradient in this context as a vector that contains the direction of the steepest step the blindfolded man can take and also how long that step should be. Note that the gradient ranging from X0 to X1 is much longer than the one reaching from X3 to X4. This is because the hill is less steep near the top, and the steepness (slope) of the hill determines the length of the vector.
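The climber's shrinking steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the hill `h(x, y) = -(x^2 + y^2)`, the starting point, and the `step_scale` factor are all hypothetical choices, not values from the article's figure.

```python
import math

def hill_gradient(x, y):
    # Gradient of the assumed hill h(x, y) = -(x^2 + y^2), whose top is at (0, 0).
    return (-2.0 * x, -2.0 * y)

def climb(start, step_scale=0.25, n_steps=4):
    """Take n_steps uphill, each step proportional to the local gradient."""
    x, y = start
    step_lengths = []
    for _ in range(n_steps):
        gx, gy = hill_gradient(x, y)
        dx, dy = step_scale * gx, step_scale * gy
        step_lengths.append(math.hypot(dx, dy))  # length of this step
        x, y = x + dx, y + dy                    # move uphill
    return (x, y), step_lengths

position, step_lengths = climb(start=(4.0, 3.0))
print(step_lengths)  # each step is shorter than the one before it
```

Because the step is the gradient scaled by a constant, the steps shorten automatically as the slope flattens near the top, which mirrors the long arrow from X0 to X1 and the short one from X3 to X4.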

A gradient measures how much the error changes in response to a change in each of the weights. "A gradient measures how much the output of a function changes if you change the inputs a little bit." - Lex Fridman (MIT)

You can also think of a gradient as the slope of a function. The higher the gradient, the steeper the slope and the faster a model can learn. But if the slope is zero, the model stops learning. In mathematical terms, a gradient is the vector of partial derivatives of a function with respect to its inputs.
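To make "change the inputs a little bit" concrete, the sketch below approximates each partial derivative by nudging one input at a time (central differences). The `error` function and its two weights are hypothetical, chosen only so the result is easy to check by hand.

```python
def numerical_gradient(f, point, eps=1e-6):
    """Approximate the gradient of f at `point` using central differences."""
    grad = []
    for i in range(len(point)):
        up = list(point)
        down = list(point)
        up[i] += eps    # nudge input i up a little
        down[i] -= eps  # and down a little
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad

# Hypothetical error function of two weights: E(w) = w0^2 + 3 * w1^2
def error(w):
    return w[0] ** 2 + 3 * w[1] ** 2

steep = numerical_gradient(error, [2.0, 1.0])  # close to [4, 6]: steep slope
flat = numerical_gradient(error, [0.0, 0.0])   # close to [0, 0]: zero slope
print(steep, flat)
```

At the second point every partial derivative is zero, so an update proportional to the gradient would not change the weights at all, which is the sense in which the model "stops learning".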

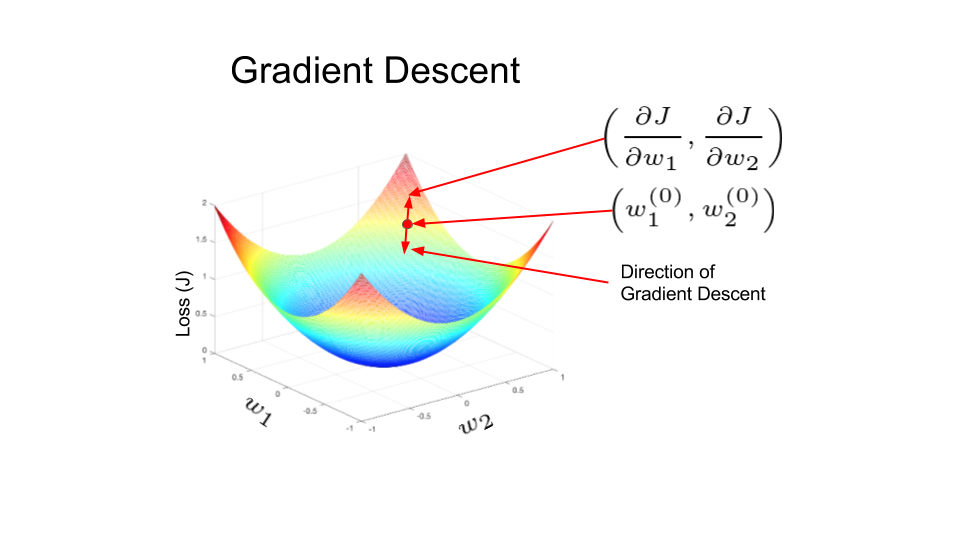

Gradient descent is an optimization algorithm for finding a local minimum of a differentiable function. In machine learning, it is used to find the values of a function's parameters (coefficients) that minimize a cost function as far as possible. You start by defining initial values for the parameters, and from there gradient descent uses calculus to iteratively adjust those values so that they minimize the given cost function. To understand this concept fully, it's important to know about gradients.
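The iterative adjustment described above can be sketched as follows. This is a minimal example, not a production implementation: the cost function `J(w) = (w - 3)^2`, the starting value, the `learning_rate`, and the number of steps are all assumptions made for illustration.

```python
def gradient_descent(grad, start, learning_rate=0.1, n_steps=100):
    """Repeatedly step against the gradient, starting from `start`."""
    w = start
    for _ in range(n_steps):
        w = w - learning_rate * grad(w)  # move downhill on the cost surface
    return w

# Derivative of the assumed cost function J(w) = (w - 3)^2.
def cost_gradient(w):
    return 2.0 * (w - 3.0)

w_min = gradient_descent(cost_gradient, start=0.0)
print(w_min)  # very close to 3.0, the minimizer of J
```

With these settings each update shrinks the distance to the minimum by a constant factor, so the parameter converges quickly. A learning rate that is too large would instead make the updates overshoot.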
